So this part on anti-consumer is a fundamental misunderstanding of the tech implementing SAM (Smart Access Memory). This is why I am excited for PCIe 5.0 and Intel's CXL!

Let me back up. AMD created a precursor to CXL on the PCIe 4.0 PHY. This is what allows for heterogeneous memory usage, where the CPU can use the GPU memory on the card directly instead of having to duplicate the data in system memory. AMD is also doing direct SSD access for loading into GPU memory. Intel's CXL goes beyond this to cache coherency and access across devices, which is why you need both CXL on PCIe 5.0, which AMD signed onto, and Gen-Z PCIe for internode connections, which are going to be revolutionary. But that is getting into server talk. Back to consumers.

AMD took their non-proprietary, open standard for PCIe 4.0 and implemented it in their products. I was not expecting to see this on the consumer side this quickly. It means Nvidia and Intel will BOTH have to look at adopting this moving forward: it either guarantees a consumer implementation of CXL on PCIe 5.0 in the future, OR it could cause Intel and Nvidia to adopt, to a degree, AMD's way of doing it on PCIe 4.0, thereby allowing that to impact how CXL will be implemented in the future. This standard was known to Nvidia, and although they did not have a Zen 3 chip to optimize and implement against (because it is not out yet), they could have asked AMD how to implement it for the server side and then put that in their desktop cards without knowing if any hardware would ever support it, or asked Intel to implement AMD's solution for PCIe 4.0.

Either way, since Intel never really put out a PCIe 4.0 product, all product development has been done on AMD CPUs. In fact, AMD and Nvidia have worked together for some server offerings. So this is NOT anti-consumer. In fact, this is forcing movement of cutting edge server tech that has barely been even implemented in servers to the consumer. Look up the whitepapers on it from 2018.

AMD never restricted the implementation of this from Nvidia NOR Intel.

Edit: Found the name again: CCIX (Cache Coherent Interconnect for Accelerators; https://en.wikipedia.org/wiki/Cache_coherent_interconnect_for_accelerators). That is a known project and is likely the basis for the entire SAM implementation, and it could also work with Intel's CXL implementation.

Yes, that will be fun to see the back and forth. And then next gen, with this shot across Nvidia's bow, things can get REAL GOOD! It is like how the 290x from AMD competing caused Maxwell to drop. So definitely exciting!!!

Turns out, RT in WoW is DXR (DirectX Raytracing), which AMD directly supports. From rumors, AMD's DXR performance is similar to Turing's, or slightly better, but still lags Ampere.

-

-

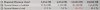

AMD Ray Accelerator appear to be slower than NVIDIA RT Core in this DXR (Ray Tracing) benchmark (videocardz.com)

-

For more information on CCIX:

https://semiengineering.com/knowled...-coherent-interconnect-for-accelerators-ccix/

"CCIX creates a system from connected components, with all of them having native access to the same memory. If you were to have designed accelerators that were on a single piece of silicon, all of the accelerators would have had access to all of the resources of the SoC. CCIX is attempting to enable the same functionality at the system level.

By bringing the notion of cache coherence between the CPU and accelerator, CCIX bypasses much of the overhead. Data structures can be created in memory, and a pointer to it sent to the accelerator. The accelerator can crunch on the data immediately, possibly making local copies of data only when it actually needs it. It is also possible that the data is being continuously updated."

https://www.electronicdesign.com/in...-cache-coherent-interconnect-for-accelerators

"The standard works with PCIe switches and in conjunction with the PCI Express protocol to provide a high-bandwidth, low-latency interconnect."

"CCIX has just been announced, so hardware is in the future. It also means that processors will need to support it to take advantage of accelerators or even memory. More on memory later."

"So why not use those connections to attach accelerators?

Most, probably all, of the multiprocessor cache interconnects are proprietary and tuned for the processors involved. It would be possible to attach additional devices, but then the support would be dedicated to a particular vendor. PCIe is a common interconnect on these systems and its switch-based expansion is ideal for supporting multiple CCIX accelerators. The CCIX architecture builds on this (Fig. 2)."

"A CCIX accelerator supports both the CCIX transaction layer and the PCIe transaction layer. The latter is used for discovery and configuration of the accelerator, while the CCIX side handles the memory transactions. This allows standard PCIe support to be used to negotiate the speed and width of the PCIe connection. The PCIe side can also be employed to configure the accelerator."

There is a lot more in that second article, but you get the point. SAM sounds like an implementation of CCIX. CXL from Intel will be able to do the same AND MORE! So Intel isn't behind and has their own open implementation coming in the future. Nvidia is able to access both CCIX and CXL, so them choosing not to is neither anti-competitive nor anti-consumer.

Edit: "The system essentially implements the heterogeneous system architecture (HSA) found in AMD’s Accelerated Processing Unit (APU) that combines its CPUs and GPUs into a common memory architecture. The difference is that CCIX is on built on an open standard, PCIe. There are numerous HSA implementations, but they are proprietary. HSA provides a common memory access environment like CCIX."

"CCIX will likely have a significant effect on future GPU architectures as well as machine learning. This connection will allow vendors to deliver CCIX-compatible devices that can be used by any host that supports CCIX. Configurations will vary from boards that contain both the host and with one or more CCIX devices to expansion systems that already utilized PCIe. In fact, only the host and CCIX devices need to know about the CCIX protocol, since it’s transparent to any cabling or PCIe switches within the system."

Last edited: Oct 28, 2020

-

A couple extra things to note on that:

1) Clocks were not final in August, although the silicon should have been fairly final, so the results may change a couple percent from that, and

2) The 2080 Ti is not there for comparison.

Why did I bring up the second point? Because we all know that AMD's first time out WILL NOT be as good at raytracing as Nvidia's second generation. PERIOD! No reason to debate it.

But, understanding how it stands up against a 2080 Ti makes a huge difference in perception of the performance. If it got spanked by both Ampere AND Turing, then if RT is the deciding factor for you, it absolutely tells you what product to buy. If it is above a 2080 Ti and good enough in actual games, then other factors come in.

Awesome find and thanks for posting it!

-

@Papusan

I just wiped the RAID 0, set it up again, and I am reinstalling Windows.

I tried downloading that Windows process explorer application, but as soon as I would launch it those cores would go to 0% usage, just like task manager did. I also disabled automatic system maintenance.

So this install made it about 2 months with good performance.

I notice even the smallest degradation in performance, even 1-2%. But I couldn’t find what was causing those cores to idle at 50%. So reinstalling is just easier.

-

The first video you linked of Phoenix, it sounds like he needs to change his pants at exactly 47 seconds in to the video lol.

Watch again and listen lol. Around 46-47 seconds.

Last edited: Oct 29, 2020

-

Robbo99999 Notebook Prophet

Had a read of that, not sure I agree with her when she said that you shouldn't really consider an 8C/16T CPU for gaming unless you use it for other stuff. I think if you're building a gaming desktop now then you want the core platform to last as long as possible and maybe last a GPU upgrade too, so if you're talking five years I think it's important to get an 8C/16T CPU. Those CPUs are so common now both in PC & in console that it's hard to imagine those resources won't be taken advantage of by game developers. We were only stagnated on 4C/8T for so long because Intel spent nearly a decade mainly offering 4C CPUs, with higher core count CPUs being ridiculously expensive and not aimed at the normal consumer market, whilst at the same time AMD had nothing to offer in competition, but that all changed with the AMD Ryzen launch. That Ryzen launch was the start of the new surge, and that's inevitably starting to seep into the plans of the game developers. There are already games that can take advantage of 8C/16T CPUs and we're not even in the future yet, ha! My next CPU will have a minimum of 8C/16T.

-

This is a very interesting video! And you are right, the RTX3080 doesn’t gain a whole lot on water cooling even with extra power. And here’s why.

@Robbo99999

I had no idea that the RTX3080 ran a much faster default frequency than a 2080Ti did. I thought it was a slightly faster boost, but not this high of a frequency gap.

I see why overclocking the 2080Ti offers such an improvement now. And not so much with an RTX3080.

This is an excellent default vs. default, FE vs. FE comparison!

Last edited: Oct 30, 2020

-

Holy Cow... Hello guys.

Yes I'm alive and well haha. Very interesting times, I hope everyone has been well and staying safe. I've been very busy with family, great things despite this crazy pandemic. Life is good... many blessings to be grateful for..


I have a lot of reading to catch up on so it'll take a while, however....

I do have a 3080 right in front of me and am eager to start having some fun testing it.

-

Robbo99999 Notebook Prophet

Cool, welcome back, let us know what you find and how much you can tweak it! I'm in a pre-order queue for an Asus TUF 3080, God knows when it will arrive though!

-

Maybe the 3070 will take some of the pressure off.

You know, I truthfully believe that the demand is not nearly as high as we think it is. I think that with all of the bots buying them all up as soon as inventory is available, they directly control the market. So, it’s almost like they have created a false sense of demand. And really they own all of the inventory just to sell for profits.

-

Do we have any dates for AMD reviews for CPU/GPU?

-

Robbo99999 Notebook Prophet

Yeah, 3070 will take a bit of the demand away from the 3080, so will the AMD 6000 series launch. I'm 900 and something in the queue for a 3080 this week (they send weekly updates to your queue position), initially I was at 1300 something, and they're all just cancellations because they actually haven't shipped any non OC TUF 3080's, it's just the OC version that is going out so far (at least from the place I bought mine - overclockers.co.uk), so 3070 & AMD 6000 series launch is just gonna create more people jumping ship....fine by me!

I guess the bots do increase the demand beyond what would otherwise occur, and certainly within the small time frame of the initial launch, but I think demand would be high for this Nvidia launch because the 3000 series is just a big step up after a disappointing 2000 series. Lots of folks decided to hold onto their Pascal 1000 series cards due to the disappointing 2000 series and also the lack of ray tracing games, and that's changing now.

-

I am curious about that myself. EVGA only uses the top tier chip from Nvidia to develop their KP cards. In my opinion, they are well worth the extra money for the features/functionality. However, a 12GB 3080 TI would make the KP edition of the 3090 a nonstarter, for me at least. I am thinking about skipping Nvidia completely this generation and going only with a 6900XT. Nvidia appears to be very reactive to AMD and that means the Supers are going to come sooner than we expect.

-

electrosoft Perpetualist Matrixist

How NOT to apply Liquid Metal.....holy canon blaster batman....

-

So, my 2080Ti at 2.1GHz is within margin of error of stock RTX3080 FE performance. I don’t see a reasonable option to get faster performance right now whatsoever. Even an RTX3090 would only perform 15% faster and cost $1,500.

I think we’re gonna see a 3080Ti very very soon. And this is exactly what I am gonna hold out for.

Nvidia has no problem cannibalizing their highest tier GPU option, “a 3090”, with something a little faster for less money to maintain their “Fastest Gaming Card” title.

The 3080Ti could even be like a 20GB video card. That would be really nice.

Last edited: Oct 31, 2020

-

electrosoft Perpetualist Matrixist

GPUs are forever a game of diminishing returns. Performance rarely, if ever, scales linearly with price. Always have been, always will be.

Pick your criteria, pull the trigger, and keep it moving.

-

LMAO..."This gentleman is unfortunately, probably screwed" Seeing that, I really want to slap his customer. I mean, badly. His customer had enough knowledge to disassemble the computer. Enough knowledge to research LM and know its fantastic properties. Yet doesn't have the knowledge to know where and how to apply LM properly. ....................

-

To start, more info on SAM. According to the LTT WAN Show, there is a chance that SAM is similar to Microsoft's Resizable BAR support. I am not fully sold that this is what it is, but it is another theory of the case and I wanted to share it.

https://docs.microsoft.com/en-us/windows-hardware/drivers/display/resizable-bar-support

Some have rumored that is why the 16GB and 20GB variants are not coming, why the 3070 Ti steps up from the GA104 die to the GA102 die as a cut down variant from the 3080, while the 3080 Ti will be a cut down variant of GA102, like everything else. That means Nvidia is producing the Ampere Quadro 6000 (the full GA102), the 3090 (2% cut down), the 3080 Ti (9% cut down), the 3080 (like 17% cut down), and the 3070 Ti (forgot the core count, but likely another 7-10% cut down, so like 25% cut down give or take a couple percent) all on the GA102 die. Considering the 100 die hasn't been used for GPUs for consumers since around the 700 series (or if not counting the 110 die variants, like the 400 or 500 flagships), this means AMD hitting back on Big Navi struck some fear into Nvidia. That is GREEAAAAT! (said in the Tony the Tiger voice)

I'm personally coming from a 980 Ti, buying around the 3080/6800XT level, which makes me want to see pricing on the 3080 Ti and if they will price that at the 6900 XT level, or will try to undercut it. If they are positioning that around the $1K price, I'm going to pass on it. If around $800, I'd jump on it, and at $900, I would likely pass, but that is DAMN good value relative to recent pricing. Like whoa value.

But, with the rumored lower ram, I really can see that having some effect depending on the game, even at 12GB, although nowhere near as noticeable as the 10GB hit that the 3080 takes in some games.

Edit: coming from a 980 Ti, that is nearly tripling my performance, so 3080 or 6800 XT is good either way.

If they do 20GB instead of 12GB, that would be nice, but they likely were not planning on having to do a less cut down die, to be honest. Now, whether or not it will be faster than the 3090 is purely a question of whether the boost is higher with the extra 7% of CUDA cores cut off. If the boost cannot overcome the cut to the CUDA cores, it won't be faster than the 3090 for less. The way the Ti used to work is that you would cut down the full die less, but more than a Titan, and the cut down, along with driver optimizations and a higher boost allowed by the lower localized heat from fewer shaders, would then outperform in gaming. I'm not sure that is going to be as effective this time, as Nvidia already has so little OC headroom on Ampere and it is an energy guzzler, so it might just sit right under the 3090, albeit at about 2/3 the price (so still a much better bargain).

Also, here is some info on RT with DLSS on and off on Ampere compared to the RX 6800. It may be from WCCFTech, but it seems not to be rumor but compared numbers. It shows RT with DLSS on the 2080 Ti and 3070 beat the 6800, while they both lose with just RT and no DLSS.

https://wccftech.com/amd-radeon-rx-6800-rdna-2-graphics-card-ray-tracing-dxr-benchmarks-leak-out/

-

What hit? I game at 4K and not a single game has been limited by 10GB lol. Even the hilariously unoptimized Watch Dogs: Legion at 4K Ultra with Ultra ray tracing maxes at 9GB, and that isn’t VRAM used. It’s VRAM requested.

I agree that 10GB should have been 12 or 20GB for marketing, but right now it’s a non-issue. By the time it really matters it will be time for an upgrade as the GPU won’t keep up anyways.

-

When the 1080Ti came out it was fully unlocked with 3,840 Cuda cores. More Cuda cores than the Titan X Pascal.

So the 3080Ti will probably be a fully fledged Ampere die. Nvidia has done it before, because Titan X Pascal owners were pretty upset that the 1080Ti would outperform their “Workstation Titan GPU”. There’s not much left anyways lol.

I hope it’s in the $849-$899 price range and uber fast. 12GB VRAM is plenty for me. I’m very happy with the 11GB on my 2080Ti. I’ve never run into an issue either.

Honestly if the 3080Ti was $1,199 I’d probably still buy one. Anyways I’m just speculating.

-

Liquid metal is amazing. But there is a huge learning curve involved with it. I’ve seen so many people treat it as a set-it-and-forget-it type of application. And it just doesn’t work that way. After the first application, that laptop would be coming right back apart anyways for the 2nd application lol.

I am not really sure who applied the LM inside that MacBook lol. But, I have seen people apply it like thermal paste and just reassemble laptops. Crazy.

Once it’s done right, it is amazing how long it actually does last though.

-

There are some games that showed much lower performance, sometimes being only a couple percent over the 2080 Ti, while with the 3070, you see it lose significantly to the 2080 Ti. You pick ONE GAME. ONE. Then act like that is representative when we know some games fill up the 11GB of the 2080 Ti already.

Now, I'll agree, it generally is edge cases at this moment in time. We both agree there, because the number of titles that hit that barrier on VRAM is currently extremely limited. But that may change in the coming years, especially with AMD competing at the high end and 16GB being given all the way down to a 3070 competitor, with the 3070 only having 8GB, something low end 580s had years ago. (Edit: Also, both consoles have 16GB of VRAM available, meaning that Nvidia is the limiting factor on ports, hilariously enough, whereas AMD's Big Navi cards, the 6900 XT, 6800 XT and 6800, will all support that much. Who knows the VRAM on the upcoming 6700 XT, which uses Navi 22 and has 40 CUs instead of the 80 CUs of its big brother, or on the 32 CU Navi 23.)

Nvidia screwed up there. It is plain and simple. They were greedy.

So, two things: 1) You are wrong that the 1080 Ti had more CUDA cores than the Titan X Pascal, and it also had fewer cores than the Titan Xp, and 2) this is why looking to the Quadros informs you of the full die size, generally.

So, let's start with the core counts of the Titan X Pascal and the 1080 Ti, then move to the second point. The Titan X Pascal had 3584 shaders or CUDA cores. That was NOT the full die. The 1080 Ti had 3584 shaders or CUDA cores. That looks like the same number of cores to me.

https://www.techpowerup.com/gpu-specs/geforce-gtx-1080-ti.c2877

https://www.techpowerup.com/gpu-specs/titan-x-pascal.c2863

In fact, the 1080 Ti also cut the number of ROPs down from 96 to 88, along with going from 12GB to 11GB of VRAM and cutting the memory bus from 384-bit to 352-bit.

Now, what is the difference between those two cards then? Well, part of it is frequency of both the Cuda Cores and the memory. The Titan X Pascal had a base clock of 1417 MHz, a boost clock of 1531 MHz, and a memory clock of 1251 MHz which gave a memory bandwidth of 480.4 GB/s. The 1080 Ti had a base clock of 1481 MHz, a boost clock of 1582 MHz, and a memory clock of 1376 MHz which gave a memory bandwidth of 484.4 GB/s. So, even though it had less VRAM, it was clocked higher and had more memory bandwidth and faster memory, going from an effective 10Gbps to 11Gbps, thereby giving better performance. It's almost like how people overclock their cards to get more performance. Who would have thought higher clock speeds and faster memory would increase performance?
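For anyone who wants to check those bandwidth numbers, they fall straight out of memory clock times bus width. This is only a rough sketch: the function name is made up for illustration, and the x8 multiplier is an assumption that matches the post's own numbers (1251 MHz is about 10 Gbps effective, 1376 MHz about 11 Gbps).

```python
def bandwidth_gb_s(mem_clock_mhz: float, rate_multiplier: int, bus_width_bits: int) -> float:
    """Peak memory bandwidth in GB/s from clock, data-rate multiplier and bus width."""
    # Effective per-pin data rate in Mbps (assumed: listed clock x multiplier).
    effective_mbps_per_pin = mem_clock_mhz * rate_multiplier
    # Multiply by bus width for total Mbps, /8 for bytes, /1000 for GB/s.
    return effective_mbps_per_pin * bus_width_bits / 8 / 1000


GDDR5X_MULTIPLIER = 8  # assumption: listed memory clock x 8 = effective data rate

# (memory clock in MHz, bus width in bits), as quoted in the post
cards = {
    "Titan X Pascal": (1251, 384),
    "GTX 1080 Ti": (1376, 352),
}

for name, (clock, bus) in cards.items():
    print(f"{name}: {bandwidth_gb_s(clock, GDDR5X_MULTIPLIER, bus):.1f} GB/s")
```

So the narrower 352-bit bus on the 1080 Ti is more than offset by the faster memory, which is the whole point being made above.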

To be clear, TechPowerUp has the difference in performance between the two cards at 3%. That's it. Just 3%. Which makes sense with only a 50 MHz higher boost and 4 GB/s more memory bandwidth, while nothing outside of professional workloads could have taken advantage of that extra GB of memory at the time, although I remember AI training COULD and DID! But that was before the tensor core Volta cards. And any extra difference between the cards can be accounted for by the driver optimizations for each line, since GeForce cards are optimized for gaming whereas Titan cards have extra optimizations for professional workloads.

Let's now move on to the Titan Xp and the Quadro P6000 cards. The Titan Xp had 3840 shaders, and the P6000 also had 3840 shaders. The P6000 had double the memory, at 24GB, whereas the Titan only had 12GB. The Titan Xp had a base clock of 1405 MHz, a boost clock of 1582 MHz (like the 1080 Ti), and a memory clock of 1426 MHz which gave a memory bandwidth of 547.6 GB/s. The Quadro P6000 had a base clock of 1506 MHz, a boost clock of 1645 MHz, and a memory clock of 1127 MHz which gave a memory bandwidth of 432.8 GB/s. They likely gave the Quadro extra core clock to make up for the slow memory speed from getting RAM with 2GB instead of 1GB per chip, which ran slower.

This brings me to my second point: you look to the Quadro 6000 series to find the non-cut-down variant of the 102 die. They charge a premium for these cards and so pick the choicest silicon off the wafers with no defects. So if you ever want to know what the full die size is, you go straight to the Quadro 6000 entry to see how many shaders are on the card.

If you look at the RTX 6000, there were 4608 shaders. Same on the RTX Titan. The 2080 Ti had 4352, or about 94% of the total cuda cores on a TU102 die. The RTX Titan had a higher boost clock, higher memory bandwidth, and more than double the memory of the 2080 Ti. In fact, there was no cut down between the Quadro and the RTX Titan this year, similar to the Titan Xp, whereas they DID cut the die down to make the Titan X Pascal line. Almost like Nvidia planned on releasing another die a year later to bilk Titan purchasers.

Now, if you jump forward to today, you have to start at the non-cut-down die of the Quadro lineup.

https://www.techpowerup.com/gpu-specs/rtx-a6000.c3686

As you can see, the RTX A6000 Quadro card has a total of 10752 shaders on the GA102 die. That is the full die and full core count. You cannot go above that without jumping to the 100 die manufactured at TSMC, and they haven't released a 100 die to consumers in nearly a decade. In fact, they've been making the 100 variant a high priced commodity, with the V100 costing $10,000 at launch, although the Titan V did get a cut down variant of it with fewer ROPs and less memory and memory bandwidth.

From here, we look at the 3090 core count and the 3080 core count. The 3090 has 10496 shaders and the 3080 has 8704, which is 97.6% and 81% of the full possible shader count, respectively. The 3090 has a base clock of 1395 MHz, a boost clock of 1695 MHz, and a memory clock of 1219 MHz (or 19.5 Gbps effective) which gives a memory bandwidth of 936.2 GB/s. The 3080 has a base clock of 1440 MHz, a boost clock of 1710 MHz, and a memory clock of 1188 MHz (19 Gbps effective) which gave a memory bandwidth of 760.3 GB/s.
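The percentages above are easy to reproduce from the shader counts. A quick sketch, using only the counts quoted in this post; the helper name is just illustrative:

```python
FULL_GA102_SHADERS = 10752  # RTX A6000, the full GA102 die per the post


def percent_of_full_die(shaders: int, full: int = FULL_GA102_SHADERS) -> float:
    """Share of the full die's shaders that a card has enabled, in percent."""
    return round(100 * shaders / full, 1)


shader_counts = {"RTX 3090": 10496, "RTX 3080": 8704}

for name, shaders in shader_counts.items():
    print(f"{name}: {percent_of_full_die(shaders)}% of full GA102")

# How many fewer cores the 3080 has relative to the 3090 (not the full die).
fewer_cores_vs_3090 = round(100 * (1 - 8704 / 10496), 1)
print(f"3080 vs 3090: {fewer_cores_vs_3090}% fewer cores")
```

Note the two different baselines: the 3080 is about 19% down from the full die, but about 17% down from the 3090.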

The 3080 boosts higher by one 15 MHz step, but has slower memory and 17% fewer cores than the 3090, and the difference in performance at stock is only 11%. So anything you do for a 3080 Ti would have to play in the ground between the two, since the 3090 is practically not cut down (it is by 2.4%, but that is to increase usable yields and would matter very little in the result). So, let's go to the rumored 3070 Ti and 3080 Ti specs.

https://www.techpowerup.com/gpu-specs/nvidia-ga102.g930

So in theory, you are getting the same memory bandwidth as the 3090, but only 12GB of memory on the card, while getting 92.9% of the full GA102 die. That is much more die than the 3080, but is 4.7% less die than the full 3090.

If memory is the same effective speed, without the dual channel, you might be able to clock it higher, while also not having memory on the backside of the PCB to cool, which could be better for OCers, but at stock, you get no benefit. That leaves the question of if the clockspeed will be kept the same as the 3090 or will be increased. If it can clock higher, then the 3080 Ti might add some performance due to extra frequency, but there are likely going to be limits on that.

So you are WRONG that the Ti series has gotten the full die.

Not even the 980 Ti, which got the 200 die (so like a 100 series, but the 800 series maxwell was a poo stain that they made go away because it ran hot and crappy). For the 1080 Ti, I already showed that was a cut down die.

So, automatically committing yourself to buy something is a little absurd, as is over-predicting the specs of the card and its performance. It will be closer in performance to the 3090 than the 3080, but as to beating the 3090, nothing I've seen nor shown here today really suggests that.

As to performance, that leaves the question: are you buying it for raytracing or DLSS? On traditional rasterization, it looks like the RX 6900 XT beats it on memory size and in raw TFLOPS, though not on memory bandwidth. But once you add in that you are buying for raytracing, then I get your point.

But think of what you said. You just committed to paying a 20% premium over a competing product with more raw horsepower and more VRAM, but with lower performance in still niche use features. You are basically telling them to charge you more and undercutting your leverage as a consumer.

Companies scrape data everywhere they can and crunch their own estimates on performance and pricing to come up with the end price. Them doing absurd pricing on the 2080 Ti and RTX Titan and Titan V are what led to them raising the 3090 to $1500, which for all intents and purposes, that IS where the former Ti slots, NOT the Titan. They then told people the 3080 is the flagship and priced it where the 2080 released at, but was the price of the 1080 Ti. Consumers saying they would pay more year after year is what drove the prices to DOUBLE in a span of 2 generations.

But I digress.

In any case, I hope this better informs you. I leave you with the NAVI 21 information, so you can compare shaders, TMUs, ROPs, and clocks. Might even inform you why AMD does better at rasterization. When Nvidia kicks into int/fp mode instead of fp/fp, you basically divide the shaders by 2 to figure out what you are actually getting on that performance.

https://www.techpowerup.com/gpu-specs/amd-navi-21.g923

Edit:

This lets you see where the RT performance is before AMD releases their version of super resolution. This is also on their 6800, not the 6800 XT. There is one raytracing core per CU, so the 6800 XT has 20% more RT cores. That likely places it where the 2080 Ti and 3070 are with DLSS on. The super resolution isn't being introduced until a month or two after the card is released, which may further scale the performance on the AMD cards. AMD is using DirectML (Direct Machine Learning). Since DXR and DirectML are poised for use over the proprietary RTX and DLSS in many games, in part just because of consoles, you may see limits to adoption of Nvidia-specific solutions. Their raytracing cores and tensor cores will still be used, however. And we won't know AMD's full RT performance until reviews, and then the super resolution effect for a month or two after that.
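To show where the 20% comes from: with one ray accelerator per CU, the RT unit count just follows the CU count. A small sketch, assuming the publicly listed CU counts of 60 for the RX 6800 and 72 for the RX 6800 XT (those counts are not stated in this post):

```python
# Compute unit counts from public spec listings (an assumption, not from the post).
COMPUTE_UNITS = {"RX 6800": 60, "RX 6800 XT": 72}

RT_UNITS_PER_CU = 1  # one ray accelerator per CU, as stated above


def extra_rt_units_percent(base: str, other: str) -> int:
    """How many more RT units `other` has than `base`, in percent."""
    base_units = COMPUTE_UNITS[base] * RT_UNITS_PER_CU
    other_units = COMPUTE_UNITS[other] * RT_UNITS_PER_CU
    return round(100 * (other_units / base_units - 1))


print(extra_rt_units_percent("RX 6800", "RX 6800 XT"), "% more RT units")
```

That is only a unit count, of course. Clocks and memory behavior decide how much of it shows up in actual RT performance.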

I just hope AMD has the review embargo lift THE DAY BEFORE THE LAUNCH. And I really think a 6800 XT with a good waterblock may be the way to go this time. It took both Rage and SAM to meet the 3090 on the 6900 XT, so a good mod and OC on a 6800 XT should have me going toe to toe with a stock 6900 XT easily (or their mild OC, as Rage is about 2% and the SAM is like 4 or 6%, so they had a 6% or 8% extra performance going on the 6900 XT, meaning OC the lower card, save $350, put $150 toward a good water block, maybe $50 toward a good backplate, then however much for additional pads for whatever you want to sink beyond that. Or, if Optimus creates a waterblock, that will likely cost $389 or so on that, so you could pick up an optimus with Fujipoly (what they ship with)).
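Rough math on that plan, as a sketch only. It assumes the Rage and SAM gains simply compound, and it uses the dollar figures from the paragraph above; the function name is made up for illustration:

```python
def combined_uplift_percent(*uplifts_percent: float) -> float:
    """Compound several percentage uplifts into one overall percentage."""
    factor = 1.0
    for uplift in uplifts_percent:
        factor *= 1 + uplift / 100
    return (factor - 1) * 100


# Rage Mode at about 2%, SAM at 4% or 6% (figures from the post).
low = combined_uplift_percent(2, 4)
high = combined_uplift_percent(2, 6)
print(f"Rage + SAM: roughly {low:.1f}% to {high:.1f}%")

# Budget: price gap between the 6900 XT and the 6800 XT, minus cooling parts.
price_gap = 350
water_block = 150
backplate = 50
left_over = price_gap - water_block - backplate
print(f"Left over for pads and extras: ${left_over}")
```

Compounding barely moves the numbers compared to just adding them, so the 6% to 8% estimate holds either way.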

Edit: Aside from 2 times where I put "is" instead of "are," I was wrong on the timeline of the 100 series as a Ti (which was shown above), as the 980 Ti was released in 2015. So, just correcting it to about 5 years instead of 10 years, for fairness to Nvidia.

Last edited: Nov 1, 2020

-

https://www.techpowerup.com/gpu-specs/nvidia-gp102.g798

https://www.techpowerup.com/gpu-specs/nvidia-tu102.g813

Just to give the 102 die lineup for the Pascal and Turing cards, since Maxwell 200 and Ampere GA102 were shown above in the last post. But since you can only attach so many screenshots/pics, I am adding this just for future reference and rebuttals. All of those come from the TechPowerUp GPU spec library.

-

I can give you guys a perfect example of why I need more than 11GB of vRAM. I'm currently producing a mini doc for my brother. I filmed the doc in 4K 120/24p with multiple cameras (S1H and A7SIII) and the multiple streams of color graded/FX'ed 4K is kicking the crap out of my single 2080 TI. Perhaps I closed the door on the 3090 too early, hahaha. Or I can search for a deal on a Titan RTX. Either way, I need more vRAM.

-

Well, there’s really nothing I can say. It looks like the 3080Ti will be a little disappointing? Maybe Nvidia will refresh it on a 7nm manufacturing process to achieve higher boost clocks like AMD is achieving?

A watercooled 2.4GHz 3080Ti would be nice! These are probably the speeds a 6800XT will hit with unlimited power and low temperatures.

-

Possibly. But, if you can wait for the next generation of Nvidia or RDNA3, I would! I am coming from a 980 Ti, I can wait no longer, but the next gen for both cards is rumored to be chiplets. That means potential ASICs, a lot of low power smaller chips, which helps with yields and silicon quality, a more mature 6nm or 5nm node (AMD lists it as an advanced node, which could be a custom transistor deal with TSMC, 6nm, or 5nm), or just a more mature 7nm (or Samsung 7nm or smaller if yields are up). Either way, if they get it to work right, you could have a significant jump in performance (similar to how Zen jumped up server and HEDT performance, although there is always the risk that the 2nd or 3rd gen is much better).

The ASIC thing is likely where the rumor that Nvidia was working on a coprocessor came from; on the server side they were looking at putting an ARM processor on the PCB with the GPU.

But I do agree, Nvidia may refresh on TSMC. But, to be honest, if it were me, I'd take the hit and try to rush out the gen after Ampere, just to get back on top. Porting over to another fab is time and money you could instead pour into speeding up the next gen. That would be around the time Radeon had the 5000 series and Kepler was here. But, that did inspire Maxwell.

So you are running a 980Ti? Wow you’re in for a seriously fun upgrade, and literally a super boost!!

How long have you been using a 980Ti? If you keep your GPUs that long, I’d buy at the absolute top. An RTX 3090 or 6900XT will only last you that much longer. Even if it’s only 10% faster. 10% is 10% lol.

Last edited: Nov 2, 2020

Normally I buy one generation old after prices crater. Picked up the 980 Ti for $300 in the run up to the 1080 Ti release. It was decent until Pascal was such a jump. But AMD has had NOTHING until now, and Turing wasn't that impressive on rasterization and had huge price increases.

So because of that, might as well buy new this round. And considering the 3070 and the 6800 do not impress me much, my recommendation is to get a 3080 or a 6800 XT if holding onto it for 4 years.

Another thing to do is grab something better than you have, but cheaper, and wait for chiplets, because that will really start the arms race. This was more an opening shot by AMD at Nvidia. Nvidia's response, if needed, will possibly even give the 100 series back to consumers if that is what it takes to get back on top! And AMD isn't holding back, so we know they are the scrappy fighter in this scenario that few thought could ever come back (thank those engineers that made Zen efficient for being moved over to RTG to fix the GPUs, among others).

Chiplets are why AMD is saying another 50% efficiency gain like they did this year! So another jump like this possibly next winter or sometime the year after!

And considering Nvidia has to respond for a change, something they haven't had to do in a long while, that is why I lean toward a new architecture pushed up versus the refresh on TSMC. They will want to dominate and crush AMD to win back mind share. Hence why I referred to Maxwell, when Nvidia really pulled away. Nvidia will try to do that again.

So, with me estimating the real large jump is next gen, not this gen, going all out now wouldn't be the best. Selling this card when those are about to drop and buying those is when you go all out!

Either way, the opening salvo is now and we are about to see a real fight next gen!!!

Well I have sealed my soul.

EVGA Micro 1 X299 and 10980XE both coming today...I hope the Micro 1's 12 phase power delivery is enough for a 10980XE.

Now I just need a 6900 XT or 3090.

You’ll be ok. Plenty of CPU power. You may or may not agree with this on a nice shiny $1,000 processor, but because the 10980XE is soldered and cannot be delidded, I would recommend spinning it on a flat surface to check how flat the IHS is. You may want to consider lapping it on some glass to make it super flat.

You’ll probably manage 4.8GHz at 1.2V or even less. The silicon seems pretty good compared to my 7980XE.

![[IMG]](images/storyImages/6-DD585-A1-DB06-4-B84-BD66-A4-B3-FF6-EC281.jpg)

Last edited: Nov 2, 2020

electrosoft Perpetualist Matrixist

Can't wait to see the MSRP on this bad boy:

https://www.evga.com/articles/01454/kingpin-3090/

-

Falkentyne Notebook Prophet

So my assorted shunt kits (5 pieces of each milliohm value per package) came in today. I bought two packages, so I have ten 10 mOhm, ten 15 mOhm, ten 5 mOhm, etc. I actually have 3 more identical packages coming from AliExpress, so that's going to be shunts for life.

- SMD Package 2512, max. 2 Watt

- 10 values, 5 pieces each

- 1, 2, 5, 8, 10, 15, 20, 25, 50 and 100 mOhm

https://www.amazon.com/gp/product/B07B2PM5TV/

https://www.aliexpress.com/item/32368758351.html

The rumor from some overclockers is that it starts at $2099. This time, stock will be limited, unlike the 2080 Ti edition, of which they produced more than they normally do.

-

Falkentyne Notebook Prophet

Conductive paint: to help attach the new shunts to the original shunts for stacking and to ensure a solid connection. I ordered the correct paint (silver) today, which has very low resistance, so it acts more like solder than like a resistor, unlike graphite or lead based conductive paint.

Then, after it has fully dried (takes about 15 minutes), add a few dabs of liquid electrical tape to ensure the shunts don't come off.

https://www.mgchemicals.com/product...ctive-acrylic-paints/silver-conductive-paint/

https://www.amazon.com/gp/product/B01MCXW1Y1/

As they say in Dark Souls... "Bloody expensive."

https://www.amazon.com/gp/product/B000QUOOPQ/

Pictures from another user using the same paint and tape I ordered:

https://www.overclock.net/threads/how-to-easy-mode-shut-modding.1773959/page-3#post-28663184

Here are some very early runs with the RTX 3080. I had to do a tune up on one of the test benches last night, and it took me several hours between cleaning out the water blocks and radiators and flushing everything... phew... it got late fast, but before I called it a night I took a quick spin down the 1/4 mile.

All in all, not bad. Very different characteristics with the 30 series as expected. I've analyzed some data on its behavior and it's quite interesting. The raw performance is pretty nice and it'll benefit those demanding titles a bit, but nothing astronomical.

Next this is going on the main test bench after I gather and record some more data.

It's that time of the year again...

GPU on air with the stock cooler of course.

https://www.3dmark.com/fs/23903049

-

Robbo99999 Notebook Prophet

In fairness, that does sound like quite an easy mod. Take the cooler off and stack the shunts - unlimited power! -
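For anyone wondering why stacking works, here is a rough sketch of the arithmetic. It assumes the card estimates power from the voltage drop across each shunt, and it assumes a 5 mOhm stock shunt purely for illustration (check the marking on your own card). Stacking a second resistor on top puts the two in parallel, so the controller sees a smaller drop and under-reports power by the same ratio. The function names are made up for this example.

```python
def parallel(r1_mohm, r2_mohm):
    """Effective resistance of two resistors in parallel (milliohms)."""
    return (r1_mohm * r2_mohm) / (r1_mohm + r2_mohm)


def reported_fraction(stock_mohm, stacked_mohm):
    """Fraction of the true power the card reports after stacking.

    The controller still assumes the stock resistance, so its reading
    scales with effective_resistance / stock_resistance.
    """
    return parallel(stock_mohm, stacked_mohm) / stock_mohm


STOCK_MOHM = 5.0  # assumption for illustration, not a measured value

if __name__ == "__main__":
    # Values taken from the 10-value kit listed earlier in the thread.
    for stacked in (5.0, 8.0, 10.0, 15.0):
        frac = reported_fraction(STOCK_MOHM, stacked)
        print(f"{stacked:>4.0f} mOhm on top: card reports {frac:.0%} of real power, "
              f"effective power limit x{1 / frac:.2f}")
```

So it is not literally unlimited: the value you stack decides how far the real limit moves, and a lower value on top moves it further.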

Falkentyne Notebook Prophet

You have to watch out which shunts you use, because some cards have 10 amp fuses on the PCIe slot input (not sure about the plugs) and some others have 20 amp fuses (on either the PCIe slot, the PCIe 8-pins, or all of them). The Founders Edition does not have fuses.
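To put rough numbers on the fuse warning, here is a small sketch. It assumes the fuses sit on 12 V inputs and that the card under-reports power by a known fraction after the mod; both the 0.5 fraction and the 75 W reported cap below are hypothetical inputs, not measurements from any card.

```python
RAIL_VOLTS = 12.0  # assumption: the fused inputs are 12 V rails


def fuse_limit_watts(fuse_amps, rail_volts=RAIL_VOLTS):
    """Power at which a fuse of the given rating is at its rated current."""
    return fuse_amps * rail_volts


def real_draw_watts(reported_watts, reported_fraction):
    """True draw when the card only reports a fraction of it."""
    return reported_watts / reported_fraction


def within_fuse(real_watts, fuse_amps):
    """True if the real draw on that input stays at or below the fuse rating."""
    return real_watts <= fuse_limit_watts(fuse_amps)


if __name__ == "__main__":
    # Hypothetical case: the card thinks an input is capped at 75 W,
    # but the mod makes it report only half of the real power.
    real = real_draw_watts(reported_watts=75.0, reported_fraction=0.5)
    for amps in (10, 20):
        print(f"{amps} A fuse = {fuse_limit_watts(amps):.0f} W, "
              f"real draw {real:.0f} W, ok: {within_fuse(real, amps)}")
```

In that made-up case the real draw is 150 W, which is past a 10 A fuse (120 W at 12 V) but inside a 20 A one (240 W), which is why the shunt value has to be picked per card.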

The 1080Ti is such a great card still. It's one of the few cards that I've kept, since selling it would actually mean taking a loss given all that the card offers. I have one in a gaming rig in the guest bedroom and the other on a test bench being used as just a GPU to turn on the monitor lol. Sad I know... both water cooled.

You're a monster. Really informative and great posts. It was nice reading familiar content written in detail. Good job.


Hey Rage, how's it going buddy? Yea I'm with you... about the VRAM talk I would love for anyone to test DCS (realistic flight sim) as that thing eats up VRAM for lunch and not only that it taxes your system Mem like no other. A large part of the system RAM usage is due to it being built on an older engine with mem leaks etc, but still the raw usage will max out your system memory with Maxed out settings.

*Sorry for the camera shot, it was taken while I was in game real quick with one hand.

If there are any DCS pilots in here, DM me... I'll fly with you.

Here are some screenshots to hopefully motivate someone to try it out LOL... because I really would like to see your RAM usage + VRAM.

This missile shot with the AIM120 from my F15 was pretty epic in taking down a cocky F14 guy that wouldn't stop yapping after him spamming all his AIM-54 Phoenix missiles at me. For those that don't know the AIM-54s from the F14 are very long range, uber fast and hard to dodge.

Formation flying during a competition SATAL 4v4 match. It's serious business lol... a ton of fun.

On my way back home, returning to base after taking out the enemy's AWACS.


-

Robbo99999 Notebook Prophet

If I do it on my 3080 I'll remember to message you re what to watch out for on my specific card. In reality I'm thinking I'll be leaving hardmods alone whilst the product is in warranty as I won't need the extra say 1-5% performance as I'm at 1080p only. I'm in pre-order for Asus TUF 3080 (non OC) and I think that has a pretty healthy power limit....maybe even a possibility that even with overclocking it won't be bumping up against power limit, but I'll see once I have the card. -

Robbo99999 Notebook Prophet

That new raytracing benchmark, it's one benchmark that scales almost linearly with core count going from 3070 to 3080, so that's good...which means 3080 is nearly 50% faster than 3070 on this task:

https://www.tomshardware.com/uk/news/3dmark-ray-tracing-added

That's a fully ray traced benchmark supposedly.
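As a quick sanity check on the "nearly 50%" figure, here is the arithmetic using the published CUDA core counts (5888 for the 3070, 8704 for the 3080). It assumes perfectly linear scaling with core count and ignores clocks and memory bandwidth, so treat it as an upper-bound sketch rather than a prediction. The helper name is made up for this example.

```python
# Published CUDA core counts for the two cards being compared.
CUDA_CORES = {"RTX 3070": 5888, "RTX 3080": 8704}


def expected_speedup(base, faster, cores=CUDA_CORES):
    """Speedup of `faster` over `base` if performance scaled linearly with cores."""
    return cores[faster] / cores[base]


if __name__ == "__main__":
    ratio = expected_speedup("RTX 3070", "RTX 3080")
    print(f"Ideal core-count scaling: {ratio:.3f}x ({ratio - 1:.1%} faster)")
```

The ideal ratio works out to just under 1.48x, so a measured result close to 50% faster really is about as linear as it gets.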

https://www.3dmark.com/3dm/52464254

My best run yesterday.

Robbo99999 Notebook Prophet

2189MHz average frequency, that's very high, how did you manage that?! Good though, of course. Did you do any mods to your GPU or its cooling?

You did 12% better than the stock 3080:

https://www.tomshardware.com/uk/news/3dmark-ray-tracing-added

Nah, the benchmark actually isn't very demanding on the traditional cores or something. It only takes like 300W overclocked like that, so it's really easy to cool. My jaw dropped when I saw how far I was able to push the chip for this benchmark. I opened up my windows and ran 100% GPU fan speed.

I need this waterblock! This might be the block I decide to go for with my 10900K and eventual Rocket Lake. 5.7GHz is crazy at 0 Celsius. WTF is this voodoo magic.

Also, what is this "beta" Intel XTU with new tuning? Intel with some tricks up their sleeve?

electrosoft Perpetualist Matrixist

12GB VRAM required for ultra settings....

https://videocardz.com/newz/godfall-requires-12gb-of-vram-for-ultrahd-textures-at-4k-resolution

AMD sponsored title, I wonder how much AMD paid them to say that? They talk about how smooth the game runs on a Radeon 6000, then show freezing and stuttering gameplay lol.

*Official* NBR Desktop Overclocker's Lounge [laptop owners welcome, too]

Discussion in 'Desktop Hardware' started by Mr. Fox, Nov 5, 2017.