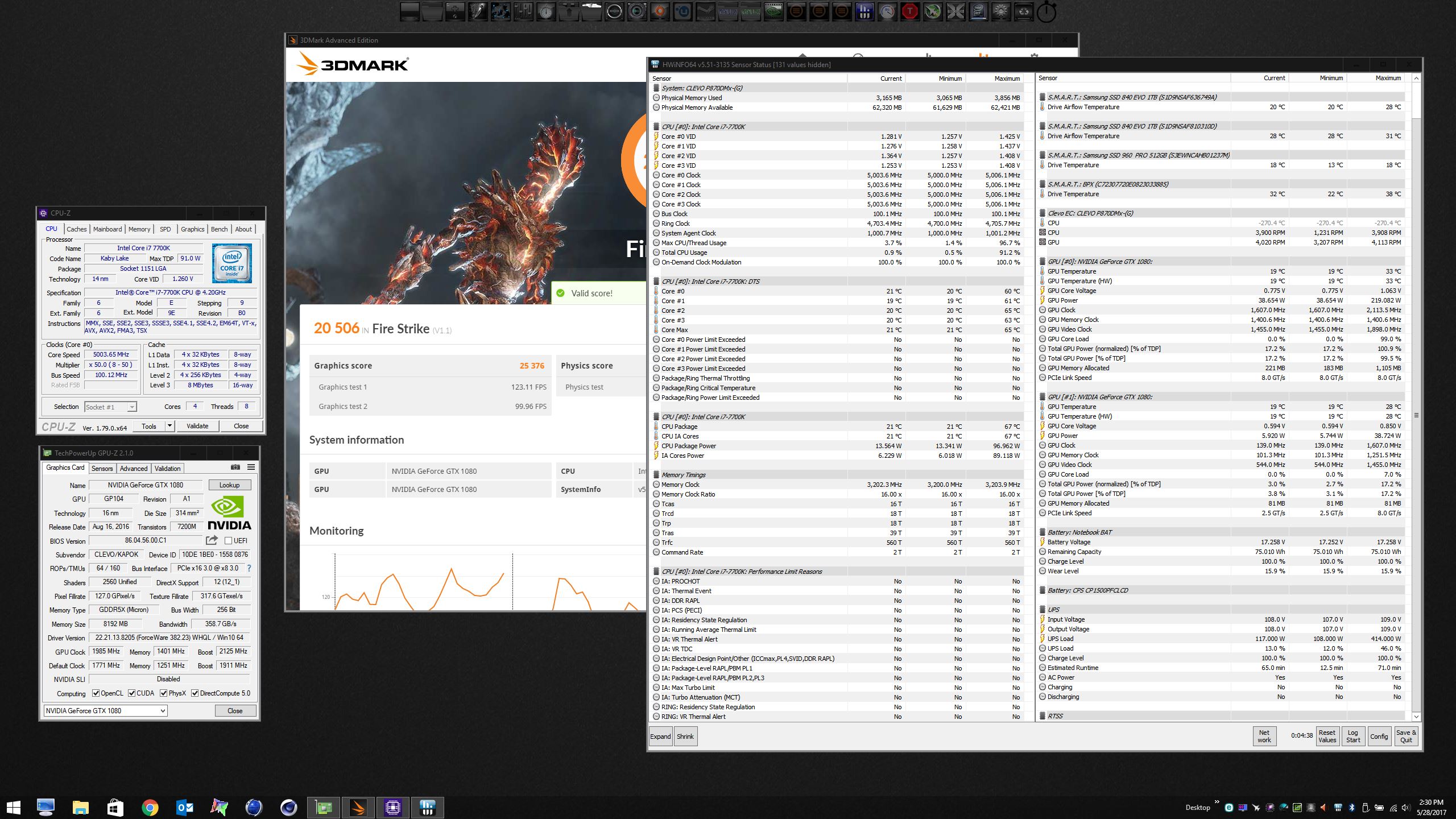

Yeah, I am pretty sure the GPUs are each drawing about 100W more than what the drivers are reporting. Here is a single 1080 with over 400W draw. Add another 300W for the second GPU in SLI and it matches the over 700W reported by the UPS with SLI. I think this also applies to my response to @D2 Ultima a few minutes ago.

-

-

Here's another example, slightly higher GPU overclock.

Edit: Ignore the EC CPU temperature... I am obviously not using sub-zero cooling. I have those sensors disabled in the BIOS so the EC can't check on the CPU temps. -

http://www.3dmark.com/compare/3dm11/11768495/3dm11/12199335#

Can't break a 50K GPU score anymore, idk why. I've tried reverting to older drivers and even setting everything to high performance. Hmmm -

You could run 3DM11 with the same clocks if you have time, just with a single GPU. Then we can see the exact difference.

-

I am getting ready to flash another @Prema vBIOS mod, so maybe later.

Here's an example of what I mentioned about W7 reading different than W10 and Inspector, GPU-Z and HWiNFO64 all reporting different memory clocks, LOL. So, yeah... I think we can stick with the UPS readings for system watts and forget about sensor accuracy for the time being.

-

Oh, so best way to go is a render server or something like that, no?

-

If yoo want max powa... Build a desktop

LOOL. And Aida64? -

Be sure g-stink is turned off. Set refresh rate to max instead of application controlled and toggle single-display performance mode, apply, switch back, apply. Not sure why this happens, but it does. I used to have to do the same with the 980 GPUs when benchmark results were suddenly and inexplicably low.

-

-

Didn't help the score =/

http://www.3dmark.com/3dm11/12199388 -

Yes, it appears you're sucking about 80W or so (give or take) more than the readout shows. 96W CPU package power + 300W GPU power + about 18W for system draw and that's a solid 414W right there.
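A rough sketch of that arithmetic in Python, in case anyone wants to plug in their own numbers. The component figures are the ones quoted above; `hypothetical_ups_watts` is a made-up placeholder, not a reading from this thread.

```python
# Software-reported component power in watts, as quoted in the post above.
reported_watts = {"cpu_package": 96, "gpu": 300, "rest_of_system": 18}


def reported_total(parts):
    """Sum the software-reported component power (watts)."""
    return sum(parts.values())


def unaccounted(wall_watts, parts):
    """Watts measured at the wall/UPS that the sensor readouts do not explain."""
    return wall_watts - reported_total(parts)


print(reported_total(reported_watts))  # 414

# Placeholder wall reading for illustration only (an assumption).
hypothetical_ups_watts = 450
print(unaccounted(hypothetical_ups_watts, reported_watts))  # 36
```

Swap in your own UPS reading for the placeholder to see how much power the sensors are missing.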

This setting should only apply to OpenGL applications when multiple monitors are enabled. It's supposed to do nothing for DirectX applications like 3DMark. If toggling it actually affects your benchmarks, you should probably gather a good deal of evidence and show it to some website that might care enough to do an article about how mobile drivers are beyond borked, with even seemingly unrelated things being broken. -

25607

And M$ haven't pushed on you a Mickey?

The system will draw more than 18W -

Have you disabled all hardware monitoring software? MSI AB/EVGA precision X/HWiNFO64? And tried turning off hardware monitoring on 3DMark?

-

-

Take a LOOK

-

Yup, none of that is even running. Only 3DM11 is running.

What am I looking for? -

I did not know if you had hardware monitoring software running or not. Bro @Phoenix turned his off, and the score went back to normal.

-

Oh, yeah I don't have any monitoring enabled. Only 3DM11 running.

I don't think anything in the BIOS should affect GPU score. -

I know now. As you see, all his scores were crippled at first. Even worse than the 3DM11 from the Notebookcheck review of the 870DM3 + Windoze X

Edit: If you have another OS image, try it. Even with Windoze X if it's the only one you have.

Last edited: May 28, 2017 -

Did you disable 3DMark 11's hardware monitoring? Or can only the new suite with firestrike/timespy do this?

-

I have a secondary SSD. I'll install 10 on it later; I was going to anyway so I can have a dual boot for timespy.

11 doesn't have any hardware monitoring as far as I know. Fire strike introduced that stuff. -

Meaker@Sager Company Representative

Yes, make sure it's sitting correctly and making good contact; they do move a bit when securing the socket. -

Until they get beaten by another man we all know and love, here are some new laptop world records with a @Prema beta vBIOS...

http://www.3dmark.com/fs/12747965

![[IMG]](images/storyImages/goWdAdm.jpg)

http://www.3dmark.com/fs/12747835

![[IMG]](images/storyImages/cMZkx64.jpg)

http://www.3dmark.com/fs/12747806

![[IMG]](images/storyImages/M5ksr66.jpg)

-

A couple more with the same @Prema beta vBIOS...

http://www.3dmark.com/3dm11/12199758

![[IMG]](images/storyImages/prj8LSN.jpg)

http://www.3dmark.com/3dm11/12199753

![[IMG]](images/storyImages/fCMXyAb.jpg)

-

OK, one more for Brother @Prema and Mr. Fox is going night-night. May you all have a peaceful Memorial Day as we reflect on the sacrifices of our military and their families.

http://www.3dmark.com/3dmv/5619399

![[IMG]](images/storyImages/SLeS6bU.jpg)

-

Well done!

Considering we have barely scratched the surface of what is in stock for the firmware, your fun runs are already looking promising!

I have been tormenting brother Fox a bit too much by putting functionality and basic range testing over the performance improvements he's waiting for. Just need to iron out the basics first before adding new bugs to the list by unleashing these monsters too early...

I know that our team boys prefer to put the pedal to the metal right away, even when the engine is still duct-taped to the chassis, and I can relate to that notion wholeheartedly...

Last edited: May 29, 2017 -

This is probably a tough one to answer, but how many man hours go into modding a BIOS? Between the actual mod and then all the testing it has got to be a ton of time, and cash. Just pulling apart the heat sink and repasting and padding is expensive.

-

When you get your IHS installed can you let me know what your temps look like in comparison to before?

-

Mine have never been better. I'm really glad I got the SKL version for my 7700K on both machines. It helped both of them. The key to good temps (not excellent benchmark scores) is to use ThrottleStop, cap the cache ratio at 42x and lower the cache voltage to something like 1.050 or 1.060V. Sadly, with the KBL BIOS this feature was eliminated. It was possible to set core and cache voltage separately with SKL BIOS. Bear in mind, this is for temps, not number chasing. Doing this lowers my benchmark scores versus having them match (ratio and voltage), but in everything other than number-chasing it doesn't matter.

![[IMG]](images/storyImages/SlnujCf.jpg)

Here are my temps with max fans pushing 4.8GHz. These benchmarks were run in succession.

![[IMG]](images/storyImages/rIftmXE.jpg)

Last edited: May 29, 2017 -

Working on new model code usually takes about a month (sometimes longer) of R&D to get everything right.

Once everything has been figured out, applying all tweaks to a new base takes just a couple of days.

Simply unlocking a BIOS takes only a few minutes, but that's not what the premamod project is about. -

A month or more is one hell of a lot of work. DAMN!

-

Woah

That is quite a lot of work!!

I hope you're also having fun while doing this!! -

Meaker@Sager Company Representative

I bet it was more fun when it was not expected

-

I need to re-watch your ThrottleStop videos. I don't have ThrottleStop running now. I just use XTU. I've got to play around with this thing a bit. I also have to watch your video on Windows 10. I was worried that my architecture and estimating software would force me to upgrade soon, so I went with Windows 10 Pro, but I see they're still selling new computers with Windows 7. I can't see that going anywhere soon.

-

The way you should apply liquid metal. Maybe he used too little?

-

Not too shabby for a lil 15" I reckon

total 7008 | gfx 7666 | cpu 14817 | cmb 2880

http://www.3dmark.com/3dm/20154625

880m @1200/3000 (factory 993/2500)

6700k @ 4600

OE samsung ram @ 2400

The above is all the poor little 240W power supply can take. With the CPU at 4.7 it's an instant shutdown at the combined stage of the FS run.
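For anyone comparing clocks, here is a small Python sketch that turns the factory and overclocked figures listed above into percentages. The helper name `overclock_percent` is just illustrative.

```python
def overclock_percent(factory_mhz, overclocked_mhz):
    """Return the overclock as a percentage above the factory clock."""
    return (overclocked_mhz / factory_mhz - 1) * 100


# 880m figures from the post: 1200/3000 overclocked vs 993/2500 factory.
core = overclock_percent(993, 1200)
memory = overclock_percent(2500, 3000)

print(f"core +{core:.1f}%, memory +{memory:.1f}%")  # core +20.8%, memory +20.0%
```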

@ 4.7 Cinebench R15 runs are doable

@ 4.8 Cinebench R15 drives the thermals to shutdown, but it's 'game & browsing stable' (with ridiculous temps to suit!)

330W PSU and delid kit on the way

Opinions wanted from the godfathers if they could be so kind. I'm on the fence between going 980m or putting the $ into a later model P75 with 1070 support. The decision is based entirely on a single *critical* factor: which card could potentially draw more power from the wall! I.e. the higher the power draw the better!

![[IMG]](images/storyImages/ouSx6as.jpg)

Last edited: May 30, 2017 -

Back to the power draw thing: where are the GPU readouts coming from? Total board draw? After the VRMs? Core only? (Losses from the VRMs and VRAM consumption may account for the "missing" power, along with system RAM, screen, CPU VRM losses, etc.)
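To put a rough number on how conversion losses could hide power, here is a minimal Python sketch. It assumes the readout is taken after the VRMs, which is exactly the open question, and the 0.90 efficiency figures are placeholders rather than measured values for any card or power brick.

```python
def estimated_wall_watts(sensor_watts, vrm_efficiency, psu_efficiency):
    """Scale a sensor-level reading up through two conversion stages.

    Both efficiencies are fractions between 0 and 1. This is a simple
    multiplicative loss model and ignores VRAM, screen, and other loads.
    """
    return sensor_watts / vrm_efficiency / psu_efficiency


# Assumed placeholder efficiencies of 90% for both the VRMs and the PSU.
estimate = estimated_wall_watts(300, 0.90, 0.90)
print(round(estimate))  # 370
```

With those assumed numbers a 300W readout would already look like roughly 370W at the wall, before counting anything the sensor does not see.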

-

So, to share the horrible news: http://www.pcworld.com/article/3198...1080-graphics-in-a-thin-and-light-laptop.html

-

-

sharing this news too

http://wccftech.com/asus-rog-strix-notebook-amd-ryzen-7-8-core-cpu/ -

One less reason for Clevo not to give us a higher core monster... Still have to prove it will sell. Also, why they didn't pair it with a 1080 is beyond me...

-

Nice, right up there with John's world-record:

http://www.3dmark.com/compare/fs/1655416/fs/10594710

Contractual exclusivity... as our resident lawyer you know how that works.

Last edited: May 30, 2017 -

I heard some rumor about nVidia not wanting their cards with Ryzen in mobile, does it hold any water?

I know for a fact that nVidia drivers very much dislike Ryzen CPUs, especially in DirectX 12. Doesn't seem like they're busy working on compatibility even though a CPU should be completely agnostic to one's GPU choice. -

Then they need to up their driver support!! Nvidia will push out their Battle Box

-

You don't know how true that statement is. In fact, here's some proof.

AMD - DX11 worst perf

nVidia - DX11 second best perf

AMD - DX12 best perf

nVidia - DX12 second worst perf

Ain't that a kick to the head? There's sometimes over 60fps difference between DX12 AMD and DX11 nVidia.

Also, this is also very interesting

Considering the piss-poor scaling in ROTTR (maximum about 47% in SLI in DX12, at GPU bottleneck territory too) this is incredible. -

Nice. Expect a hell of a lot of people to be damn angry at Nvidia if they get screwed on performance with Nvidia's advertised Battle Box and Ryzen

I mean it's nice for people that Nvidia now has opened up for Ryzen in their own playground aka their Box. Everything will hit back on Nvidia

If the performance really sucks!!

Clevo Overclocker's Lounge

Discussion in 'Sager/Clevo Reviews & Owners' Lounges' started by Spartan@HIDevolution, Mar 4, 2016.

![[IMG]](images/storyImages/E6p6yl5.jpg)

![[IMG]](images/storyImages/VuCK0WB.jpg)

![[IMG]](images/storyImages/7FQhJzg.jpg)